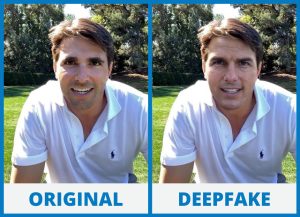

Deepfakes are an AI-powered technology that takes images, video, or audio of a person and generates a new file with an artificial likeness of them. With the right software, anyone can create these fake videos or other digital media files and spread them without the consent of the individual they’re based on. Unfortunately, deepfakes are incredibly difficult to identify as fakes without technological assistance.

However, there are technologies in development that can detect deepfake files, which should help limit the harm they do to the people they depict.

How are deepfakes used?

Deepfakes are used to create realistic fake videos and images.

There are two main components to these deepfake videos or images. The first takes a real-world person’s face, voice, or footage and edits it so that it can be placed into the video or image. The second is an algorithm that generates the final video with that person placed in it.

Much of the technology behind deepfakes builds on generative adversarial networks (GANs), which Ian Goodfellow and his co-authors introduced in the 2014 paper “Generative Adversarial Networks”. Goodfellow later worked as a researcher at Google Brain.
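For readers who want the core idea in one line, the 2014 paper frames training as a game between two networks: a generator G that turns random noise z into fake samples, and a discriminator D that tries to tell real samples x from fakes. The objective below is the one stated in that paper. How any particular deepfake tool adapts it is not covered here, so treat this as background rather than a description of a specific app.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator pushes this value up by getting better at spotting fakes, while the generator pushes it down by producing fakes that the discriminator scores as real.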

Deepfakes can be used for a wide range of purposes, such as fake celebrity pornography, celebrity impersonations, fake news, and even fake Twitter accounts.

As of right now, one of the best-known tools for creating deepfakes is FakeApp, and it has been adapted to work with major video editing tools such as Adobe Premiere Pro, Final Cut Pro X, or Windows Movie Maker. The program takes an input video and applies the deepfake to it. As it has become more well known, many people have created tutorials for making deepfakes. There are also lots of apps that you can download onto your phone or computer that will edit your face onto someone else’s face or body.

These fake videos usually use motion capture to track a person’s body movements, but that tends to make the deepfake look unnatural and unrealistic. A newer style-transfer method uses live footage of someone’s face, along with details of their voice. Because it works from real footage, its results are more natural, but they can still look fake.

Why are they bad?

The main issue with deepfakes is that they are almost completely indistinguishable from the real thing. If you can’t tell whether a video or image has been altered, it becomes worthless as evidence of what really happened. Even more concerning, AI-generated content is becoming more realistic every day, and this trend doesn’t seem likely to slow down anytime soon.

The fact that these deepfake videos and images are so difficult to detect as fakes has also led to their use for nefarious purposes. Their ability to spread without consent has already caused serious issues on YouTube, where such videos need to be manually approved by the platform before they are accessible.

Deepfakes are also being misused to make pornography. Although this is not a new concept, deepfake pornography has become more prevalent as the technology has become more widely available.

ASUS donated money to a research group at Stanford that is creating an AI algorithm that can detect deepfake videos. Having this capability will be critical for the future of video editing, as it will protect victims from inappropriate use of their images and the media from misinformation spread by these videos. This technology will definitely come in handy to combat the rise of deepfake pornography too.

Deepfake for propaganda?

Deepfakes are mainly used to create pornography or to fake evidence of infidelity in relationships. It is also possible to use them for propaganda and slander. This makes deepfakes a dangerous weapon for people with malicious intent.

It is possible to use deepfakes to spread fake news, making them hazardous for the public. Using deepfakes, you can take someone’s photo and use it in a negative way. Examples include the deepfake videos “Donald Trump joins Breaking Bad” and “Nancy Pelosi saying slurs”, or a deepfake video that makes a woman appear to say vulgar or sexist things that make her look bad.

It is possible to use them to damage someone’s reputation or character by creating fake videos or images of them doing something bad. Hell, it could even be something embarrassing like farting. For most people, though, a convincing fake would simply destroy their reputation and make them look like bad people in general.

Deepfakes are very hard to detect because the algorithm puts a lot of work into turning the real footage into a convincing deepfake video. You also can’t tell that they’re fake unless you know what to look for, such as a video that doesn’t have any audio. Even though it’s hard to detect fakes, people are still trying to find ways to do it. One way is to use facial recognition on videos and images, and another is to use AI software such as Face++.

One of the main problems of deepfakes is that we don’t know who made them.

However, deepfakes clearly have a future ahead of them, for better or for worse.

Although there are many issues with this technology, there are many reasons to be optimistic about its future as well.
